You Can’t Punch the Algorithm, So You Buy the Organic Body Wash Instead

How to be a consumer like it's 2026

We walk around with this lingering illusion that someone is ultimately in charge.

We imagine a pristine control room atop a glass high-rise, where “serious adults” stand ready to hit the emergency button the moment things go off the rails.

Eventually, we grow up and realize those very adults are just staring up at someone else, waiting for them to make the move.

We’re watching a steady accumulation of events that would have once been unthinkable, now arriving with such regularity that they’re processed less as global shocks and more as items in a feed you thumb past while waiting for your oat milk latte.

We have a military operation in Iran, followed by a headline about the Epstein files. A military push into Venezuela, and then, the Epstein files. The killings of Renée Good and Alex Pretti, eclipsed by the Epstein files. A war grinding on in Ukraine, a humanitarian catastrophe in Gaza that has dropped any pretense of being temporary, the U.S. exiting sixty-six international institutions... and through it all, the persistent, unresolved static of, you guessed it, the Epstein files.

Each one deeply consequential, each one unresolved, all of them simultaneous, and somehow, all of them normalized.

Not because any of this feels normal, it very clearly doesn’t, but because nothing ever really ends anymore. There are no conclusions, no moments of accountability, just a relentless displacement by the next crisis.

And this is where the math gets dangerous.

Ray Dalio has spent decades studying the mechanics of how world orders start wearing out, and his conclusion is worryingly straightforward.

Empires don’t collapse because the citizenry suddenly loses its moral compass. They collapse when the social contract is voided. They fall when people stop believing the government will regulate fairly, when the markets feel irreparably rigged, and when institutions act less like pillars of society and more like extraction machines.

In other words, empires collapse when their legitimacy finally erodes to its hollow core.

Are we there yet?

For the longest time, we relied on the illusion that someone, somewhere, was managing the macro-level chaos so our brains didn’t have to. And when that fiction shatters—when we realize we are completely exposed to a wildly complex system that views us as nothing more than data points—our nervous systems understandably look for an escape route.

But what do we do when we realize we have absolutely zero leverage over the macro? We can’t fix inflation, we can’t halt a war, we can’t punch an algorithm. That anxiety has to go somewhere, so it reroutes violently downstream, and we frantically grab for the micro: our habits, our daily routines, our purchases, the few remaining things we can actually touch.

This is why we used to blindly toss the cheapest body wash into our carts, but now we stand frozen in aisle four, squinting at a label, asking: “Is this the full price? Who or what else am I funding?” It is nothing more than an attempt to buy a twelve-ounce bottle of moral absolution, a way to soothe a nervous system trapped in a society that has entirely lost the plot.

This mismatch—this desperate need to control the micro when the macro is lost—explains exactly how we arrived at our current cultural moment. It is why we are now deeply, almost spiritually, concerned about whether AI is “drinking our water,” yet we can barely sustain a fifteen-minute conversation about the fact that we are building synthetic truth-machines in a society that no longer agrees on what truth is.

Let’s be clear: data centers do consume massive amounts of water from fragile places. It is a real ecological crisis. But the sheer scale of the public discourse isn’t proportional to the metric; it’s proportional to our desperate need for accountability.

We can’t picture algorithmic bias. We can’t hold surveillance capitalism in our hands. But water? Water is wet. We can imagine a river running dry. In a digital economy where human exploitation is neatly flattened into “efficiency,” water is the last piece of reality we haven’t outsourced to the cloud.

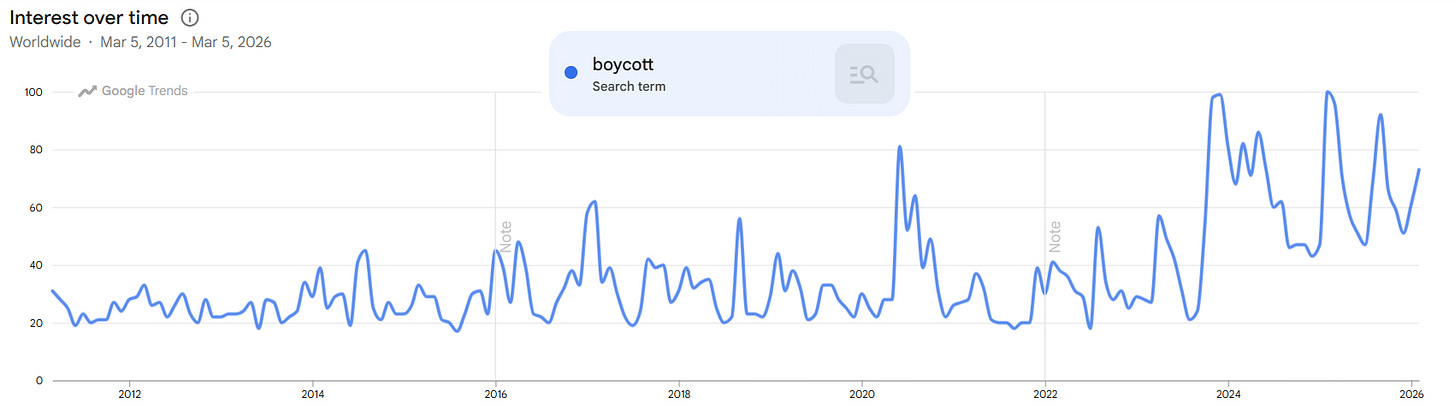

This same reflex—the desperate grasp for the micro—is exactly what is driving the current backlash against OpenAI and pushing Claude past ChatGPT in the App Store.

When the opportunity to exert even a fraction of control presents itself, we take it. It is a necessary rebellion of the exhausted. It is why we are auditing our tech stacks, canceling our subscriptions, and severing ties with platforms that view us merely as inventory.

When the cynics insist that these isolated choices are just drops in the bucket, they conveniently forget that drops are precisely what carve canyons.

History is littered with the hubris of arrogant institutions that believed they were immune to a collective of human beings who had simply decided they’d had enough.

We are finally realizing that the definition of consumption has expanded.

Consumption is no longer a passive act of receiving: attention is consumption, a click is consumption, and the mere act of “engaging” is an active transfer of wealth. We are learning to treat our focus the same way we treat our capital: as a finite resource that must be spent on purpose.

We don’t stand in aisle four and buy the organic body wash because we harbor some delusion that it will fix democracy. We buy it because, in a world where institutional trust has evaporated, our shopping carts and our screen time are the only arenas left where we can practice moral clarity without filing paperwork or getting tear-gassed.

If 2026 becomes the year of the ethical consumer, it won’t be because we all suddenly ascended to some elevated state of enlightenment. It will be because living inside unresolved, macro-level chaos forces the human nervous system to crave coherence wherever it can find it.

So, we do the only thing left to do...

We pull the levers we can reach.

Pull them hard.
